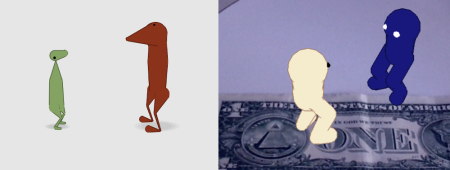

The following creepy humanoids provide ample reason to fear artificial intelligence:

This is just one example of virtual humans that would be appropriate in a horror movie. There are many others. Here’s my question: why are there so many creepy humans in computer animation?

The uncanny problem is not necessarily due to the AI itself: it’s usually the result of failed attempts at generating appropriate body language for the AI. As I point out in the Gestural Turing Test: “intelligence has a body”. And nothing ruins a good AI more than terrible body language. And yes, when I say “body language”, I include the sound, rhythm, timbre, and prosody of the voice (which is produced in the body).


Simulated body language can steer clear of the uncanny valley with some simple rules of thumb:

1. Don’t simulate humans unless you absolutely have to.

2. Use eye contact between characters. This is not rocket science, folks.

3. Cartoonify. Less visual detail leaves more to the imagination and less that can go wrong.

4. Do the work to make your AI express itself using emotional cues. Don’t be lazy about it.

Shameless plug: Wiglets are super-cartoony non-humanoid critters that avoid the uncanny valley, and use emotional cues, like eye contact, proxemic movements, etc.

These videos show how wiglets move and act.

Artificial Life was invented partly as a way to get around a core problem of AI: humans are the most sophisticated and complex animals on Earth. Simulating them in a realistic way is nearly impossible, because we can always detect a fake. Getting it wrong (which is almost always the case) results in something creepy, scary, clumsy, or just plain useless.


In contrast, simulating non-human animals (starting with simple organisms and working up the chain of emergent complexity) is a pragmatic program for scientific research – not to mention developing consumer products, toys, games, and virtual companions.

We’ll get to believable artificial humans some day.

Meanwhile…

I am having a grand old time making virtual animals using simulated physics, genetics, and a touch of AI. No lofty goals here. With a good dose of imagination (people have plenty of it), it only takes a teaspoon of AI (crafted just right) to make a compelling experience – to make something feel and act sentient. And with the right blend of body language, responsiveness, and interactivity, imagination can fill in all the missing details.

Alan Turing understood the role of the observer, and this is why he chose a behaviorist approach to asking the question: “what is intelligence?”

Artificial Intelligence is founded on the anthropomorphic notion that human minds are the pinnacle of intelligence on Earth. But hubris can sometimes get in the way of progress. Artificial Life, on the other hand, recognizes that intelligence originates from deep within ancient Earth. We are well-advised to understand it (and simulate it) as a way to better understand ourselves, and how we came to be who we are.


It’s also not a bad way to avoid the uncanny valley.

Posted by Jeffrey Ventrella


Puppeteering is the art of making something come to life, whether with strings (as in a marionette), or with your hand (as in a muppet). The greatest puppeteers know how to make the most expressive movement with the fewest strings.


And that means people can stop flying around the world and burning fossil fuels in order to have 2-hour-long business meetings. And that means reducing our carbon footprint. And that means we might have a better chance of not pissing off Mother Earth to the degree that she has a spontaneous fever and shrugs us off like pesky fleas.
